The internet is converging. Boris Müller, a professor of interaction design at FH Potsdam, called it “the visual weariness of the web.” Research by Sam Goree and colleagues at Indiana University backed this up empirically: over two million pairwise comparisons showed that websites have homogenized dramatically since 2007, with layout diversity declining the most. AI is accelerating this. Wharton researchers found that in 37 out of 45 comparisons, ideas generated with ChatGPT were significantly less diverse than those from other methods. Hamilton Mann coined the term “AI-formization” in Forbes to describe how algorithms lead to uniformity in artistic expression, cultural experiences, and creative content. The New Yorker reported that AI tools make our brains less active and our writing less original.

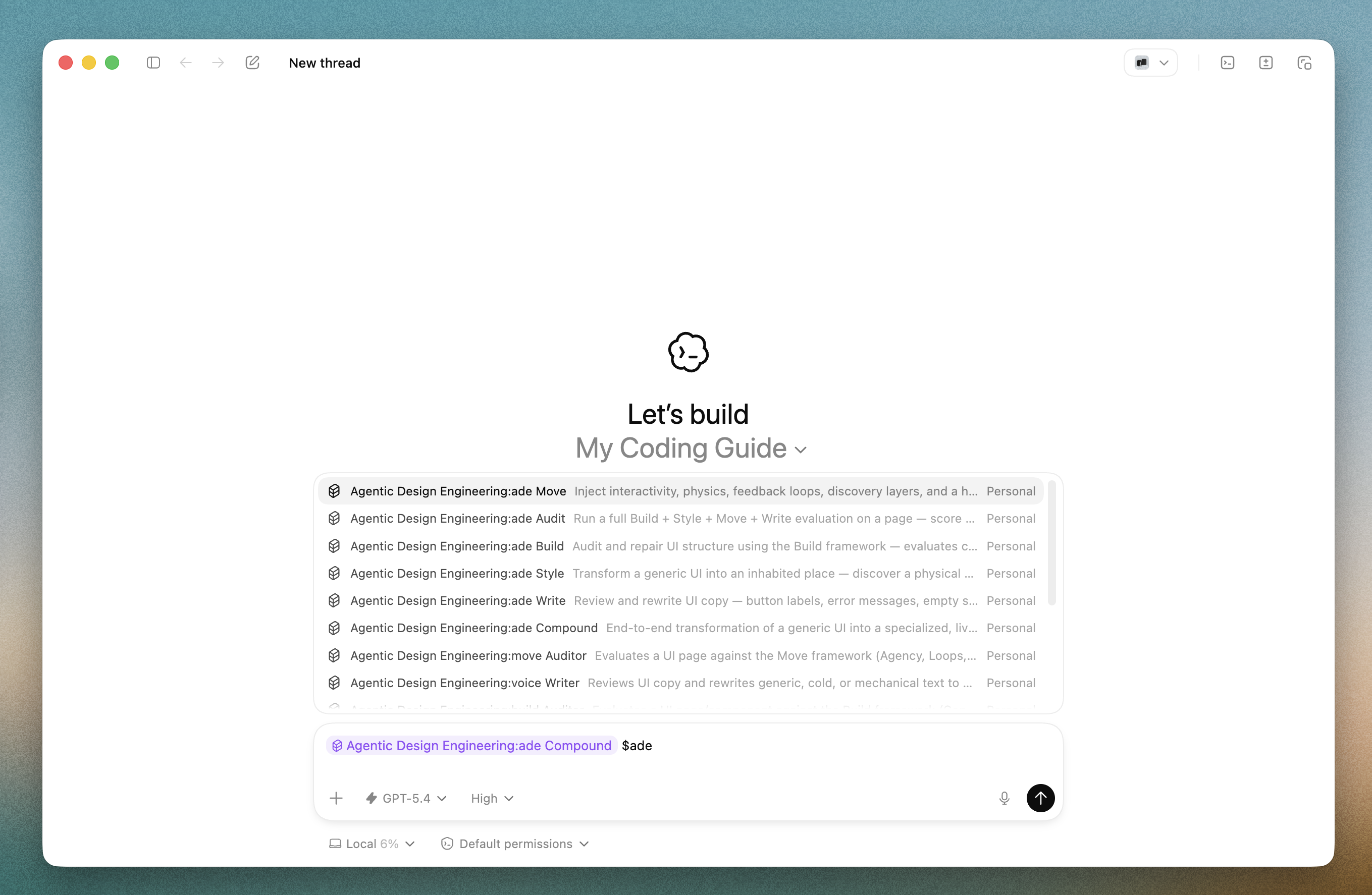

And then there’s what you see every day. Open any AI coding tool — Claude, ChatGPT, v0, Bolt — and ask it to build a landing page. You’ll get Inter font, Lucide icons, blue-to-purple gradients, rounded corners, and a hero-features-testimonials-CTA-footer layout. Every time. Reddit threads on r/Frontend and r/vibecoding are full of practitioners calling it out: every vibe-coded app looks the same. One commenter named the exact phenomenon — “institutional isomorphism.” Everything converges toward the statistical center of the training data. Competent, functional, forgettable.

I coined the term Agentic Design Engineering because I believe this sameness is not inevitable; it’s a design failure. As a designer trained at Parsons School of Design, I felt that uniform design was deeply wrong. Design should be intentional, deliberate, and unique. It should communicate a feeling, an experience, a way to operate. Not a template with your logo slapped on top.

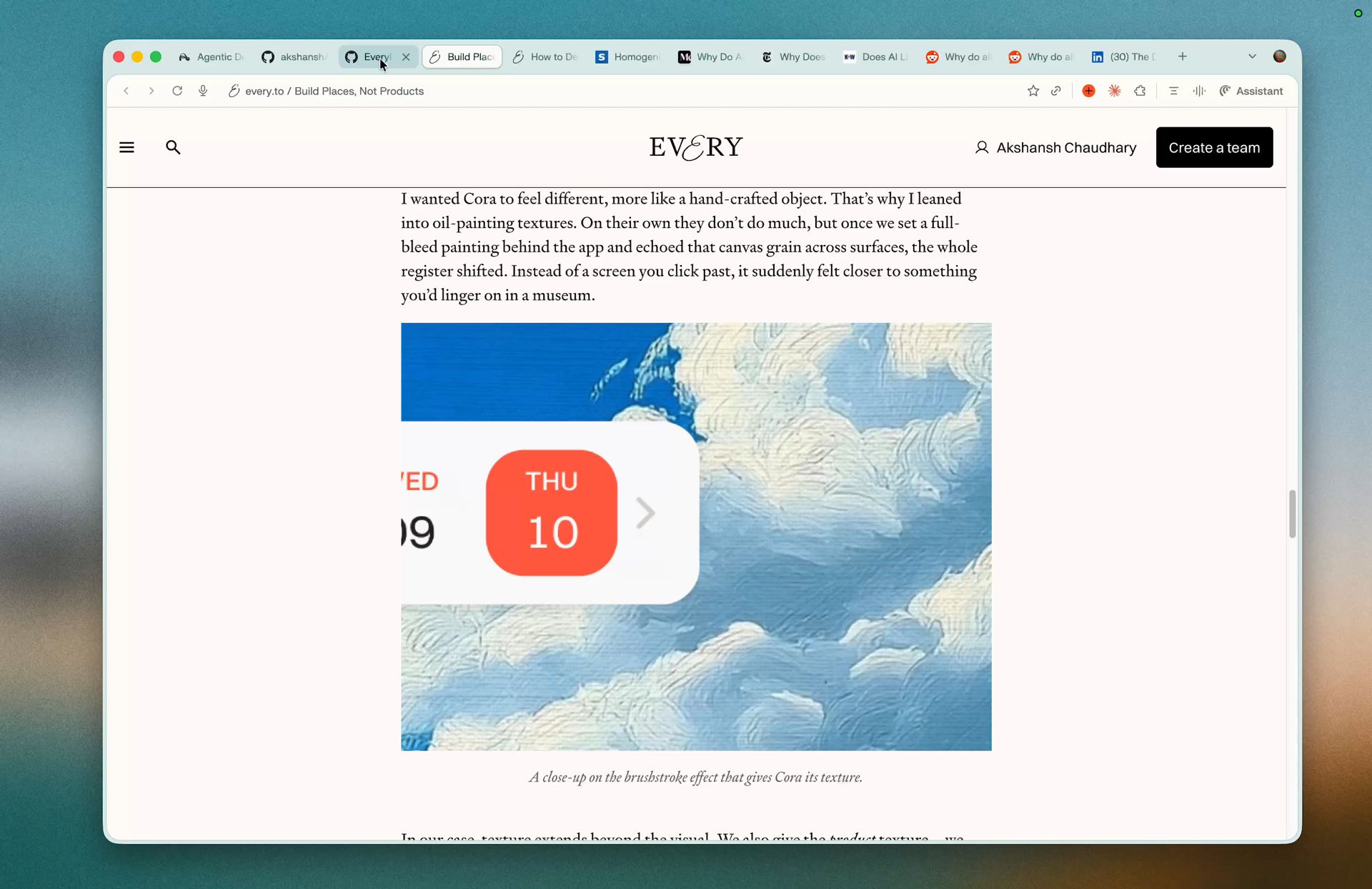

The inspiration came from two places. First, the exceptional design work by the team at Every, particularly their product Cora, which showed what it looks like when style is treated as a first-class concern. Second, Kieran’s Compound Engineering plugin, also from Every, which became the structural reference for how to package these ideas into something an agent could actually run. Agentic Design Engineering (ADE) approaches design through a designer’s lens but with AI awareness: an agent does the design work for the code being engineered.

The Workflow

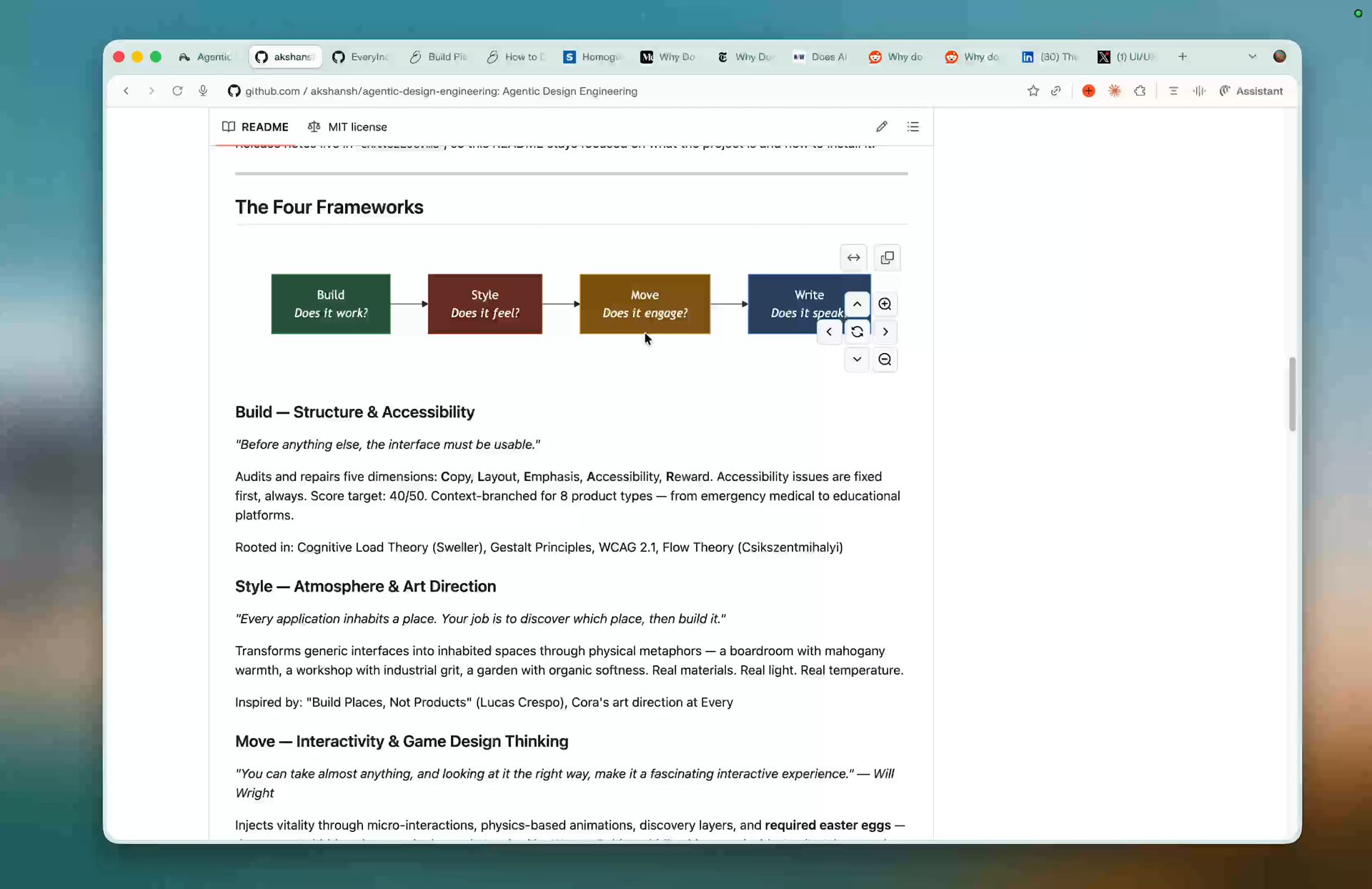

The system is four frameworks applied in sequence. Each one builds on the last. The order matters.

Build → Style → Move → Write

- Build: Fix the structure. Audit and repair five dimensions: Copy, Layout, Emphasis, Accessibility, Reward. Accessibility issues are fixed first, always. Score target: 40/50. Rooted in cognitive psychology: Sweller’s Cognitive Load Theory, Gestalt Principles, WCAG 2.1.

- Style: Set the atmosphere. Discover a physical metaphor for the product (a boardroom library, a workshop, a garden), then build CSS atmosphere layers from real materials, real light, real temperature. Five design iteration cycles per page. The first version is scaffolding, not design.

- Move: Inject vitality. Map interaction dead spots, add physics-based animations, create discovery layers, and plant a hidden easter egg: the creator’s signature in the work. Rooted in Will Wright’s game design theory and Warren Robinett’s Adventure (1979).

- Write: Polish the copy. Review and rewrite UI copy (button labels, error messages, empty states, loading text) to sound intentional, warm, and human. Not generic AI copy. Scope: UI copy only.
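To make the Build scoring concrete, here is a minimal sketch of how the five dimensions and the 40/50 target could be modeled. The 0 to 10 scale per dimension is my assumption, inferred from the 40/50 target; the names `BuildScores`, `totalScore`, `meetsTarget`, and `orderFixes` are hypothetical and not taken from the plugin.

```typescript
// Hypothetical sketch, not the plugin's code. Assumes each of the five
// Build dimensions is scored from 0 to 10, which yields the 50-point scale.
const DIMENSIONS = ["Copy", "Layout", "Emphasis", "Accessibility", "Reward"] as const;
type Dimension = (typeof DIMENSIONS)[number];

type BuildScores = Record<Dimension, number>;

interface Issue {
  dimension: Dimension;
  description: string;
}

const BUILD_TARGET = 40; // target score out of 50, as stated in the text

// Sum the five dimension scores.
function totalScore(scores: BuildScores): number {
  return DIMENSIONS.reduce((sum, d) => sum + scores[d], 0);
}

// True when the page reaches the Build target.
function meetsTarget(scores: BuildScores): boolean {
  return totalScore(scores) >= BUILD_TARGET;
}

// Accessibility issues are fixed first; all other issues keep their order.
function orderFixes(issues: Issue[]): Issue[] {
  const accessibility = issues.filter((i) => i.dimension === "Accessibility");
  const rest = issues.filter((i) => i.dimension !== "Accessibility");
  return [...accessibility, ...rest];
}
```

A page scoring 8, 9, 7, 9, and 6 across the five dimensions would total 39 and fall just short of the target under this assumed scale.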

Every skill begins with Step 0: Understand — the agent builds a Product Portrait, grasping the domain, user persona, and emotional weight before evaluating or transforming anything. Build gates Style. Style gates Move. Move gates Write. Skip or reorder, and the chain breaks.
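The gating rule can be sketched in a few lines. This is an illustration under my own assumptions, not the plugin's implementation: I treat a stage as simply "completed" or not, and the names `STAGES`, `blockingStage`, and `nextStage` are hypothetical.

```typescript
// Hypothetical sketch of the Build -> Style -> Move -> Write gate.
// Assumes a stage is either completed or not; the real plugin may gate
// on scores or other criteria.
const STAGES = ["build", "style", "move", "write"] as const;
type Stage = (typeof STAGES)[number];

// Returns the earlier stage that still blocks `next`, or null if `next` may run.
function blockingStage(completed: ReadonlySet<Stage>, next: Stage): Stage | null {
  for (const stage of STAGES) {
    if (stage === next) return null; // every earlier stage is done
    if (!completed.has(stage)) return stage; // first unfinished prerequisite
  }
  return null;
}

// Returns the first stage not yet completed, or null when the chain is done.
function nextStage(completed: ReadonlySet<Stage>): Stage | null {
  for (const stage of STAGES) {
    if (!completed.has(stage)) return stage;
  }
  return null;
}
```

With only Build completed, asking for Move reports Style as the blocker, which is the "skip or reorder, and the chain breaks" rule expressed as a check.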

How It Ships

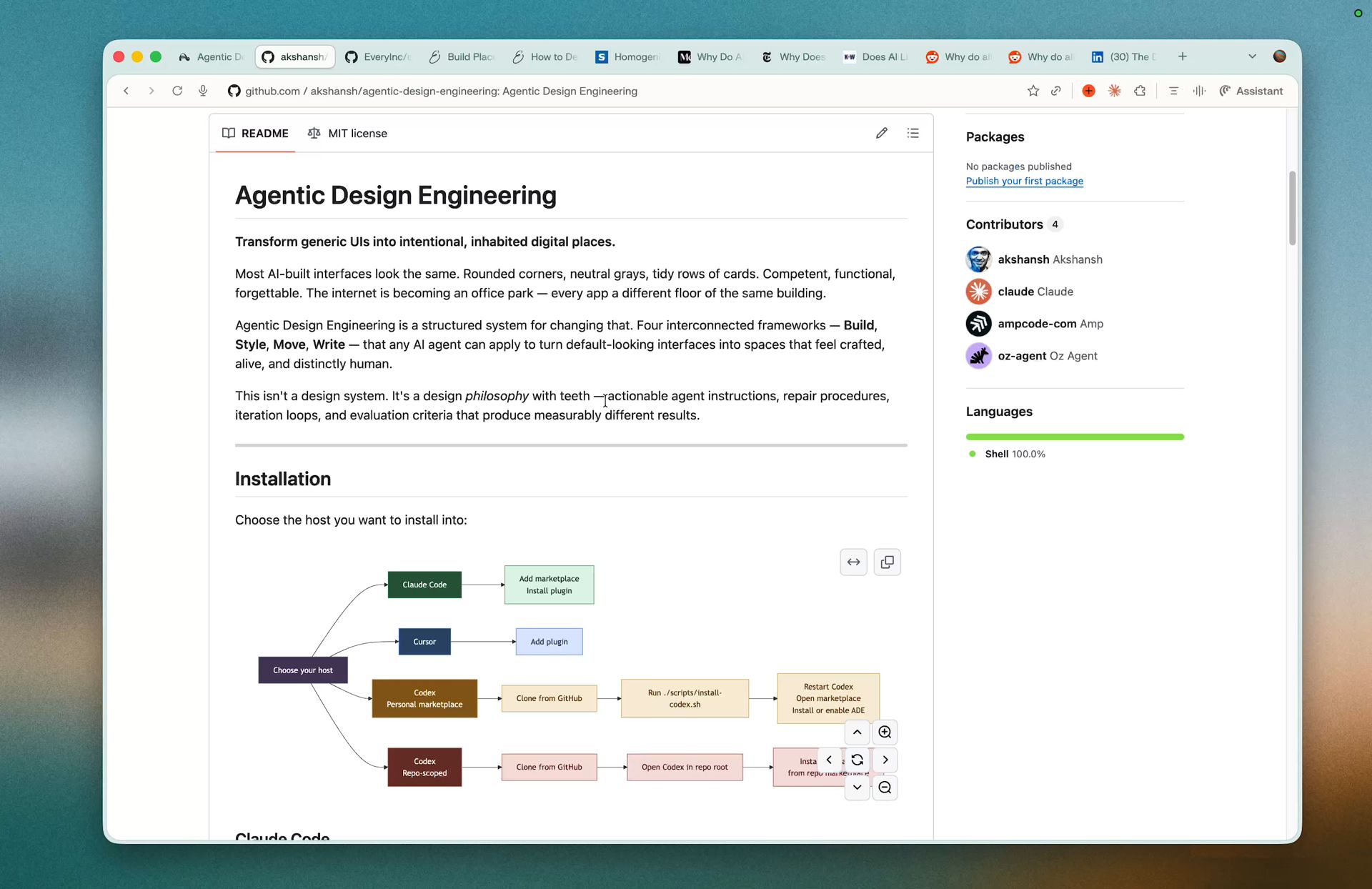

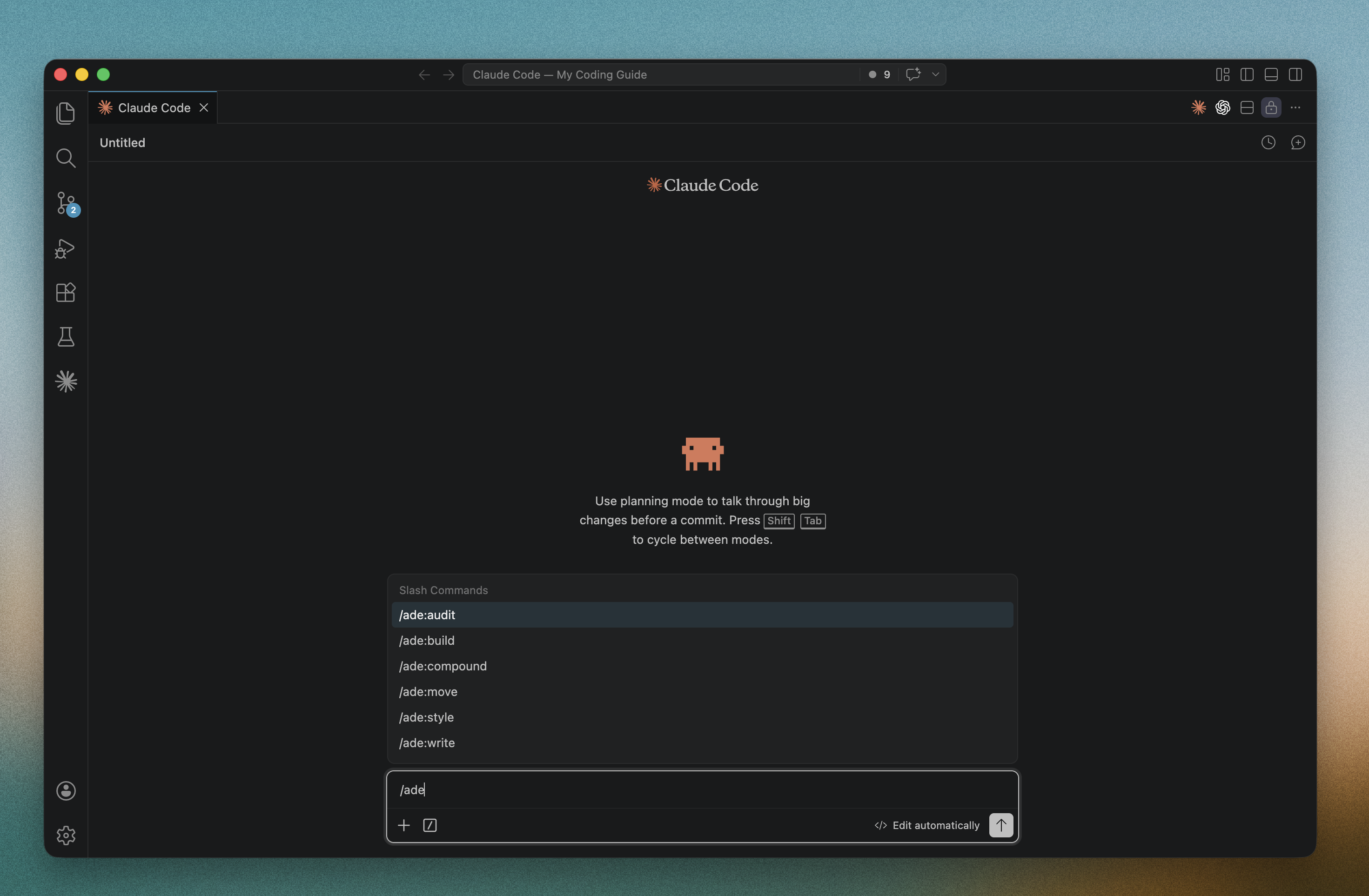

The plugin works across Claude Code, Cursor, and OpenAI Codex. Six commands: /ade:build, /ade:style, /ade:move, /ade:write, /ade:audit (score all four frameworks read-only), and /ade:compound (run the full pipeline end-to-end). Nine specialized agents power the skills — from the codebase-comprehender that builds the Product Portrait, to the metaphor-discoverer that suggests three ranked metaphors, to the vitality-injector that patches interaction dead spots.

Autonomous mode lets you say “surprise me” and the agent makes every decision independently — including metaphor selection via a 5-criteria scoring rubric. Before/after scoring with delta tables ensures improvement is measurable, not a matter of opinion. Structured cross-framework handoffs pass context between stages so nothing is lost.
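As a rough illustration of rubric-based metaphor selection and before/after deltas, here is a small sketch. The five criteria are not named above, so they appear only as five anonymous scores; the types and function names (`MetaphorCandidate`, `pickMetaphor`, `deltaTable`) and the highest-total rule are assumptions for illustration, not the plugin's API.

```typescript
// Hypothetical sketch. The five rubric criteria are unnamed in the text,
// so each candidate simply carries five numeric scores.
interface MetaphorCandidate {
  name: string;
  scores: [number, number, number, number, number];
}

// Pick the candidate with the highest rubric total (assumed rule).
// On a tie, the earlier candidate wins.
function pickMetaphor(candidates: MetaphorCandidate[]): MetaphorCandidate {
  if (candidates.length === 0) throw new Error("no metaphor candidates");
  const total = (c: MetaphorCandidate): number =>
    c.scores.reduce((sum, s) => sum + s, 0);
  return candidates.reduce((best, c) => (total(c) > total(best) ? c : best));
}

interface ScorePair {
  framework: string;
  before: number;
  after: number;
}

interface DeltaRow extends ScorePair {
  delta: number;
}

// Build one delta row per framework: after minus before.
function deltaTable(pairs: ScorePair[]): DeltaRow[] {
  return pairs.map((p) => ({ ...p, delta: p.after - p.before }));
}
```

A positive delta in every row is what "measurable, not a matter of opinion" would look like in this sketch; a negative one flags a regression to review.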

The Origin

This started with a meeting archive. Venus Remedies had 94 management meetings spanning 6 years — institutional memory trapped in PDFs. The first version I built worked perfectly. And felt like nothing. So I asked: what if this login page wasn’t a form — but a heavy wooden door to a private boardroom? What if meeting cards weren’t UI components — but leather-bound ledgers on a dark shelf? Five iterations later, the app had mahogany warmth, brass accents, candlelight, and a keyhole ornament on the login page. It felt like entering institutional memory. Not viewing it.

The frameworks I built to get there became Agentic Design Engineering.

It’s open source under MIT because knowledge hoarded is knowledge wasted. The counter-movement to AI sameness — what designer Ioana Teleanu describes as a culture responding “not with cleaner lines but with friction, texture, glitch, and nostalgia” — needs tools, not just manifestos. This is one of those tools. Build places, not products.